By TechBay.News Staff

Product: NotebookLM

Company: Google

Category: AI research assistant / note synthesis tool

What NotebookLM Is (and Isn’t)

NotebookLM is Google’s attempt to turn large language models into something closer to a research companion than a chat toy. Instead of prompting an AI with open-ended questions and hoping it doesn’t hallucinate, NotebookLM is designed to work inside a bounded set of sources you provide—documents, notes, PDFs, transcripts, or links.

That design choice is the product’s defining feature.

NotebookLM is not a general-purpose chatbot. It doesn’t browse the open web on demand. It doesn’t try to sound clever. It reads what you give it, grounds its responses in those materials, and helps you interrogate them.

In other words: it’s less about generating new content and more about making sense of existing information.

How It Works in Practice

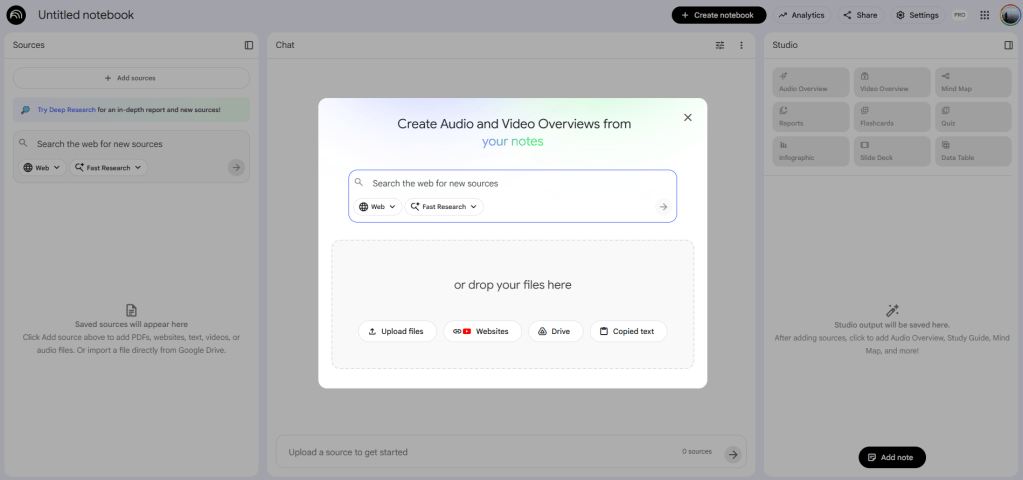

Using NotebookLM starts with building a notebook around a topic. You upload or link source material—policy documents, research papers, meeting notes, court filings, technical specs—and the system ingests them as the knowledge base.

From there, you can:

- Ask questions that are answered only from your sources

- Generate summaries, outlines, and thematic breakdowns

- Compare passages across documents

- Trace where specific claims come from

- Ask for explanations in plainer language

Crucially, NotebookLM shows citations back to the source material. That doesn’t eliminate error, but it changes the posture of the tool from “trust me” to “check me.”
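For readers who want to see the "check me" idea in concrete terms, here is a minimal sketch of source-bounded answering with citations. It is not Google's implementation, which is not described here. It swaps in simple word overlap where a real system would use a language model, and every name in it (`Passage`, `answer_from_sources`, the file names, the `min_overlap` threshold) is a hypothetical chosen for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical document name, used as the citation
    text: str


def tokenize(text):
    """Lowercase the text and return its distinct words of four or more letters."""
    return set(re.findall(r"[a-z]{4,}", text.lower()))


def answer_from_sources(question, passages, min_overlap=2):
    """Return (passage, score) pairs that share enough words with the question.

    An empty list means the sources do not cover the question. That is the
    bounded behaviour this toy illustrates: no supporting passage, no answer.
    """
    q_words = tokenize(question)
    hits = []
    for p in passages:
        score = len(q_words & tokenize(p.text))
        if score >= min_overlap:
            hits.append((p, score))
    # Highest overlap first; ties keep their original order.
    return sorted(hits, key=lambda h: h[1], reverse=True)


# A hypothetical three-document notebook.
notebook = [
    Passage("budget_memo.txt", "The transit budget increases by four percent next year."),
    Passage("council_minutes.txt", "Council members disagreed on the transit budget timeline."),
    Passage("parks_report.txt", "Park attendance rose during the summer months."),
]

# Every returned passage carries its source, so the reader can check it.
for passage, score in answer_from_sources("What happens to the transit budget?", notebook):
    print(f"[{passage.source}] {passage.text}")

# A question the sources do not cover returns nothing rather than a guess.
print(answer_from_sources("Who won the football match?", notebook))
```

The first query returns the memo and the minutes, each tagged with its file name. The second returns an empty list, which is the toy equivalent of the tool declining to answer from outside its sources.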

Where NotebookLM Works Well

1. Source-bounded reasoning

This is NotebookLM’s biggest strength. By constraining the model to your materials, it reduces hallucination and keeps answers anchored to text you can check. For research, analysis, or legal and policy work, this is a meaningful improvement over generic AI chat.

2. Pattern extraction, not content inflation

NotebookLM is good at surfacing themes, contradictions, and gaps across documents—things humans often miss when buried in volume. It’s less interested in filling space and more interested in organizing meaning.

3. Cognitive offloading, not replacement

Used well, it acts like a second set of eyes. It doesn’t replace judgment, but it shortens the distance between raw information and informed understanding.

4. Low friction for complex material

Dense PDFs, long reports, or messy notes become more navigable. Asking “What are the unresolved issues?” or “Where do these documents disagree?” is often faster than manual review.

Where It Falls Short

1. Still dependent on input quality

NotebookLM cannot rescue bad, incomplete, or biased source material. If your documents are flawed, the output will be too, only more coherently phrased.

2. Limited outside context

Because it doesn’t freely incorporate external information, it can’t point out relevant facts or counterarguments that sit outside your sources. That’s a feature for rigor, but a limitation for discovery.

3. Not a collaboration tool (yet)

It’s primarily an individual research environment. Teams expecting shared workflows, versioning, or project management will find it narrow.

4. Requires good questions

The tool rewards thoughtful prompts. Users looking for instant answers without engaging the material may find it underwhelming.

Who This Is Actually For

NotebookLM is best suited for:

- Journalists and researchers working with large document sets

- Lawyers, policy analysts, and advocates reviewing filings or legislation

- Consultants and analysts synthesizing client or market materials

- Students or academics organizing sources for papers

- Anyone doing reading-heavy work who wants help thinking, not writing

It is not ideal for:

- Creative writing or ideation

- Marketing copy generation

- Casual Q&A

- Teams looking for workflow automation

The Strategic Significance

NotebookLM hints at a quieter shift in AI design.

Rather than positioning AI as a universal answer engine, Google is framing it as a decision-support layer—one that sits on top of human-curated information and helps users reason through it.

That matters.

If AI adoption is going to move beyond novelty and into high-stakes domains—law, governance, enterprise decision-making—tools like this are more likely to succeed than open-ended chatbots. They don’t eliminate human judgment; they formalize its inputs.

Bottom Line

NotebookLM doesn’t feel magical. It feels useful.

Its value comes from restraint: limiting scope, grounding responses, and emphasizing comprehension over creativity. For people whose work depends on understanding complex materials accurately, that’s not a drawback—it’s the point.

As an AI product, it’s less flashy than competitors. As a research tool, it’s more serious.

Disclosure: No affiliation or compensation.
